Facial Tracking

Extension introduction

The XR_HTC_facial_tracking extension lets developers build applications in which 3D avatars reproduce the user's actual facial expressions.

Supported Platforms and Devices

| Platform | | Headset | Supported | Plugin Version |
|---|---|---|---|---|
| PC | PC Streaming | Focus 3/XR Elite/Focus Vision | Yes | 2.0.0 and above |
| PC | Pure PC | Vive Cosmos | No | |
| PC | Pure PC | Vive Pro series | Yes | 2.0.0 and above |
| AIO | | Focus 3/XR Elite/Focus Vision | Yes | 2.0.0 and above |

Enable Plugins

- Edit > Plugins > Search for OpenXR and ViveOpenXR, and make sure they are enabled.

- Edit > Plugins > Built-in > Virtual Reality > OpenXREyeTracker, and make sure it is enabled.

- Note that the "SteamVR" and "OculusVR" plugins must be disabled for OpenXR to work.

- Restart the engine for the changes to take effect.

How to use the OpenXR Facial Tracking Unreal Feature

- Make sure ViveOpenXR is enabled.

- Edit > Project Settings > Plugins > Vive OpenXR > Under Facial Tracking, click Enable Facial Tracking to enable the OpenXR Facial Tracking extension.

- Restart the engine to apply the new settings after clicking Enable Facial Tracking.

- For the available FacialTracking functions, refer to ViveOpenXRFacialTrackingFunctionLibrary.cpp.

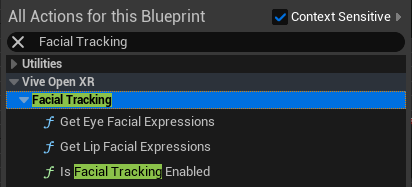

- Type Facial Tracking to get the Facial Tracking blueprint functions your content needs.

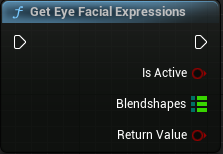

-

Get Eye Facial Expressions

Provides the blend shapes of the eyes. -

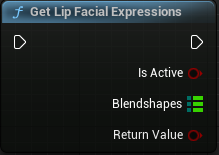

Get Lip Facial Expressions

Provides the blend shapes of the lip.

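As a rough, engine-independent illustration of what content might do with blend-shape data like that provided by the functions above, the sketch below maps an array of weights onto named morph targets. It assumes the weights are floats meant to lie in the 0 to 1 range. The `MorphBinding` type, the `BuildMorphTargets` helper, and the morph target names are hypothetical and are not part of the ViveOpenXR plugin.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <string>
#include <unordered_map>
#include <vector>

// Hypothetical binding: which blend-shape index drives which morph target.
struct MorphBinding {
    std::size_t blendShapeIndex;  // index into the weights array
    std::string morphTargetName;  // name on the avatar mesh (assumed)
};

// Converts a raw weights array into named morph target values.
// Each weight is clamped to [0, 1]; bindings whose index falls
// outside the weights array are skipped rather than read out of bounds.
std::unordered_map<std::string, float> BuildMorphTargets(
    const std::vector<float>& weights,
    const std::vector<MorphBinding>& bindings)
{
    std::unordered_map<std::string, float> result;
    for (const MorphBinding& binding : bindings) {
        if (binding.blendShapeIndex >= weights.size()) {
            continue;
        }
        const float raw = weights[binding.blendShapeIndex];
        result[binding.morphTargetName] = std::max(0.0f, std::min(1.0f, raw));
    }
    return result;
}
```

In an Unreal project, each resulting name and value pair could then be applied to the avatar with `USkeletalMeshComponent::SetMorphTarget`, provided the mesh defines morph targets with matching names.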

Play the sample map

- Make sure the OpenXR Facial Tracking extension is enabled. The setting is in Edit > Project Settings > Plugins > Vive OpenXR.

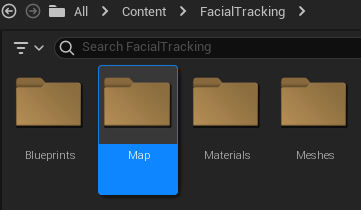

- The sample map is under Content > FacialTracking > Map.

- Start playing the FacialTracking map, and you will see the avatar's expression change.